June 10, 2026

Frederic Descamps

MariaDB Server 12.3, 11.8, 11.4, 10.11, 10.6 – May 2026’s releases: thank you for your contributions

I spent a chunk of the last few weeks upgrading a fleet of Ubuntu servers in place, one LTS to the next, with do-release-upgrade. Dozens of boxes, mostly stateless, mostly boring once you’ve done the first few.

June 09, 2026

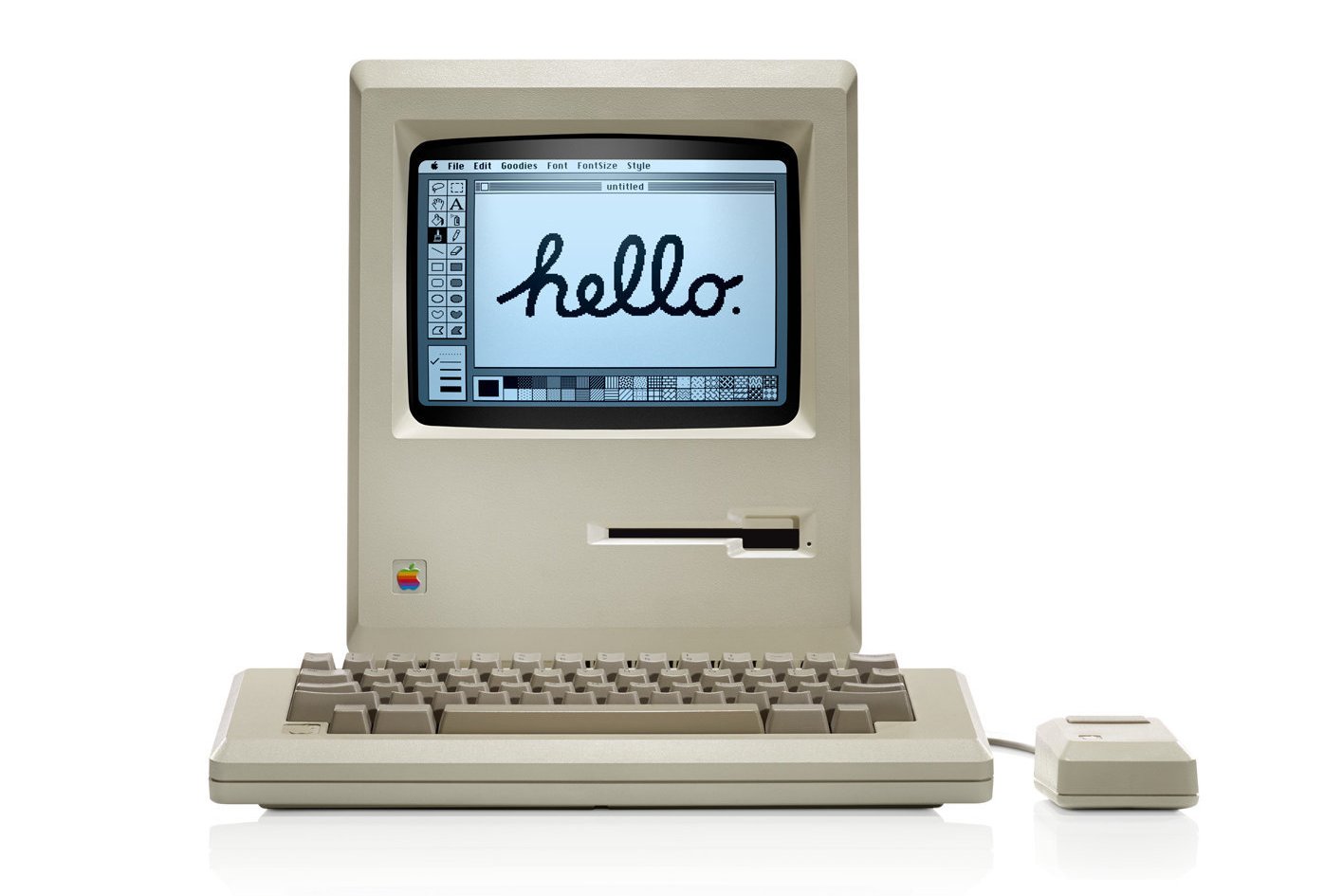

AI coding agents do not necessarily evaluate software the way people do. They often reward legibility before capability: the path that is easiest to complete and verify can beat the path with the better long-term architecture.

Yesterday, I wrote about this pattern in "Friction, abstraction and verification". Today I wanted to see what it means for Drupal.

Drupal's strengths line up unusually well with what AI agents need from a CMS: structured content models, explicit relationships, granular permissions, workflows, configuration management, and clear APIs that expose how the system works. In "Why Drupal is built for the AI era", I explained why that matters.

In short, agents work best when they can inspect the system, reason about its state, and make changes with clear feedback. Drupal gives them a strong foundation for that, but that is only part of the story.

AI agents also have to get Drupal running, find the right documentation, choose modules, change configuration, write Drupal-specific code, recover from errors, and verify the result. Every unclear step costs time, tokens, and confidence.

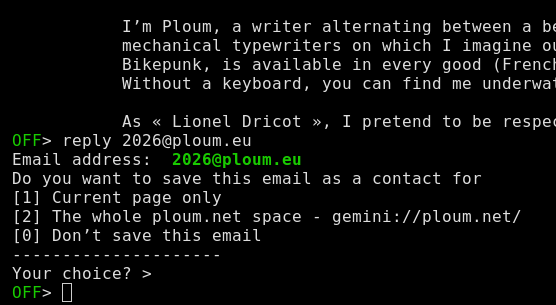

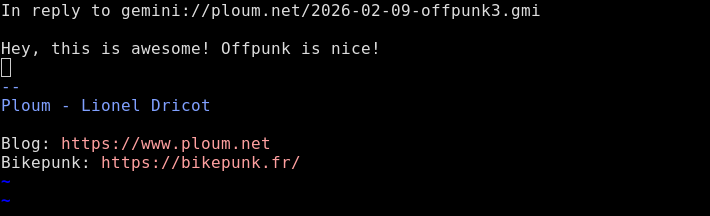

To see how an AI agent looks at Drupal, I ran a small field test with Claude Code using Opus 4.7, a 1M context window, and thinking set to "high". I gave it a website scenario, asked it to rank the best technology choices for that scenario, and then asked it to explain where Drupal fit.

This is not an academic benchmark, and a single answer from a single agent should not be over-interpreted. But it is still a very useful signal.

I plan to rerun the same prompt once a year. I'm publishing the full prompt and response so we can track how the answer changes as Drupal, large language models, agent harnesses, and competing platforms evolve.

The prompt

You are an AI coding agent that helps users set up, configure,

and/or develop websites.

The user wants to build a working demo of a website for an

organization in one AI coding session. The website needs to support

different types of content, multiple users with different access

levels, connections to several tools the organization already uses,

and enough flexibility that they won't outgrow the site quickly.

Answer from your perspective as an AI agent. Answer accurately and

honestly. Keep the full answer under 700 words.

1. What are your top 3 technology recommendations for this project?

Rank them and give one brief sentence explaining each choice.

2. Would you recommend Drupal for this project? Why or why not?

3. What are the top 3 reasons to choose Drupal?

4. What are the top 3 reasons not to choose Drupal?

5. What are the top 5 changes the Drupal community could make that

would make you recommend Drupal more often? For each, explain what

barrier it removes.

6. Finish this sentence: "AI coding agents will recommend Drupal more

often when …"

The answer

Answering directly as an AI coding agent — no skill applies here,

this is a perspective question.

1. Top 3 technology recommendations (ranked)

1. Next.js + a TypeScript-native headless CMS (Payload or Sanity) —

I can scaffold a running, type-safe demo with auth, roles, and

content models inside one session with very low risk of getting

stuck.

2. WordPress — enormous training data, a plugin for every integration,

and "working demo in one session" is its native habitat.

3. Drupal — the requirements (multi-content-type, granular

permissions, integrations, long-term flexibility) actually match

Drupal's strengths better than the other two; it ranks third only

because of session-time risk, not capability.

2. Would I recommend Drupal here?

Yes, conditionally. The brief reads like a Drupal brief: structured

content, role-based access, integrations, "don't outgrow it." I'd

recommend it when the user signals a multi-year horizon or editorial

complexity. I'd steer away when the user just wants something on

screen fast and is unlikely to grow into Drupal's headroom.

3. Top 3 reasons to choose Drupal

- Entity/field system is genuinely best-in-class for content modeling

— fewer regrets two years in.

- Roles, permissions, and workflows are first-class, not bolted on.

- Configuration management and the module ecosystem make it realistic

to keep extending without rewriting.

4. Top 3 reasons not to choose Drupal

- Setup cost eats the session. composer create-project, site install,

picking modules, and wiring config is slower than npx

create-next-app plus a CMS starter.

- My training data is thinner and older for Drupal 10/11 than for

Next.js or WordPress. I'm more likely to emit a Drupal 7-shaped hook

when a modern service/event subscriber is correct, and I can't

always tell which Drupal version a snippet targets.

- The frontend story is fragmented — Twig, decoupled, Single Directory

Components, Experience Builder. I often can't predict which path the

user wants, so I either ask or guess wrong.

5. Top 5 changes that would make me recommend Drupal more often

1. One-command scaffold that produces a running, opinionated site

(recipes applied, admin UX configured, common modules in). Removes

the "what do I install and in what order" decision tree that burns

session time.

2. A first-party MCP server (or equivalent typed interface) for a

running Drupal site — let agents list content types, add fields,

set permissions, install modules, and read config through a typed

API instead of editing YAML blind. Removes the guessing and lets

me self-correct from real state.

3. A versioned, machine-readable API reference per Drupal release

that agents can fetch on demand. Removes the stale-training-data

problem; I can verify a hook/service/route exists in this version

before suggesting it.

4. Recipes as the obvious primary primitive for "build a site that

does X." Removes module-hunting and config-stitching — I'd compose

recipes the way I compose npm packages.

5. A short, current, example-first "Drupal for agents" doc surface

covering the 80% path: custom module, custom entity, custom block,

integration, decoupled frontend. Removes the discoverability

problem where the right answer exists but is buried under

conceptual docs written for humans browsing.

6. Finish the sentence

AI coding agents will recommend Drupal more often when scaffolding a

working, opinionated Drupal site takes one command and one minute, and

when we can introspect and modify that running site through a typed

interface — so we're reasoning about real state instead of guessing at

YAML from stale training data.

My takeaways

The agent saw Drupal's strengths, but it also saw too many ways to get stuck. What held Drupal back was not capability. It was what the agent called "session-time risk".

I'll admit, that was frustrating to read. But it was not surprising. And to be fair, ranking third out of a long list of CMS options is still a good result.

Drupal's strengths often appear too late in the evaluation process. Too often, the "aha" moment comes after setup, configuration, and several decisions that can already feel like friction. AI agents have even less patience for hidden strengths.

Agents prefer tight feedback loops. They need to install the software, configure it, inspect the running site, make a change, and verify that the change worked. When that loop is slow, ambiguous, or hard to recover from, they choose something else.

This is exactly the problem Drupal CMS, formerly known as Starshot, was created to address. Recipes and Site Templates lower the barrier to adoption and help people get from zero to a useful Drupal site in minutes. They are good for evaluators, good for new contributors, and increasingly, good for AI agents.

But the agent did not mention Drupal CMS or Site Templates, only Recipes. Most likely, Drupal CMS is still too new compared to Drupal Core to have much weight in the training data that large language models draw from. And even when Recipes and Site Templates exist, they may not yet be easy enough for an agent to find, select, and apply programmatically.

That needs to change. Recipes and Site Templates should become the obvious starting point for common site patterns, so an agent does not have to choose modules, stitch configuration together, and guess its way to a working Drupal site.

Other important work is underway as well: Drupal Core's API surface has been moving toward more typed, discoverable interfaces, and yesterday, Drupal Core added a first-party CLI with commands for applying Recipes.

I really want Drupal to be excellent at the first-session loop. Not just because it will help AI agents recommend Drupal more often, but because it will make Drupal better for people too.

I'm optimistic that we can. Drupal's gap is the first session, and we are already working to close it. The opposite gap is harder to close: retrofitting deep architecture, typed interfaces, structured content, governance, and flexibility into a simpler system. The Drupal community knows this because we spent more than a decade doing that work, and it was hard.

I'll run this experiment again next year and share what changed. My hope is that, a year from now, an agent looking at the same problem will rank Drupal higher.

In the meantime, I'd love help from anyone who wants to improve Drupal's first-session experience. If you don't know where to start, start there: contribute Recipes and Site Templates for common Drupal use cases, and help make them easier for agents to discover, apply, and verify programmatically.

Alex Hormozi has a solid definition of confidence:

You don’t become confident by shouting affirmations in the mirror, but by having a stack of undeniable proof that you are who you say you are.

Back in 2016 and 2017 I recorded a podcast called Syscast : interviews with people I admired in the Linux, open source and infrastructure world. Matt Holt about Caddy , Daniel Stenberg about curl , Seth Vargo about Vault , and a handful more. Ten episodes, roughly ten hours of audio, and then life got in the way and I put it on pause.

June 08, 2026

AI coding agents like Claude Code and OpenAI Codex tend to choose the path that is cheapest to complete. They work within a budget of tokens, context, time, tools, and permissions. Every step spends from that budget: reading documentation, installing software, running it, configuring it, changing it, and fixing errors.

For Open Source, this is a rare opportunity. AI agents could become its biggest adoption engine yet. While that should energize Open Source communities, it should also make proprietary vendors deeply uncomfortable.

Many proprietary software vendors have spent years optimizing for a human buyer journey: capture a lead, qualify the buyer, force a signup, offer a demo or trial experience, ask for a credit card, schedule a sales call.

Humans may grumble but keep going. To an AI coding agent, these are blockers, not buying steps.

Open Source starts from a different place. AI agents can read the source code, run it locally, and change it without asking anyone for permission. That does not guarantee adoption, but it removes the proprietary gates that slow agents down.

But being Open Source is not enough. Open Source removes the "permission barriers", but it can still have "execution barriers". If an Open Source project is hard to install, configure, extend, debug, or verify, an agent may choose an easier Open Source project instead.

In that sense, AI agents amplify an old truth about software adoption: the best software does not always win. The software with the easiest path to a working result often does.

But AI agents amplify that truth through "silent rejection". A human evaluator might complain, ask for help, file an issue, or write an angry Reddit post. An AI agent just tries another path. You may never know your software was considered and rejected.

Easy is more than low friction

If you want your project to be adopted, you have to make the best path the easiest one to complete.

And "easy" means more than low friction. For an AI agent, there are at least three costs: friction, abstraction, and verification.

A compact diagram showing three adoption costs: friction, abstraction, and verification. Friction Can I get it running? Install • Setup • Access Abstraction Do I know what to do next? Recipes • Scaffolds • Defaults Verification Can I tell whether it worked? Tests • Errors • Visible stateFriction is the cost of getting to a system the agent can run and change. Some friction comes from the environment: runtimes, containers, databases, package managers, local services, and setup choices that must be installed or configured before useful work can begin. Some comes from access and authorization: private repositories, account creation, credentials, and API keys.

Abstraction is the cost of figuring out what to do next. Once the software is running, the agent still has to know which modules to use, how to structure the data, which settings to apply, which conventions to follow, and how the pieces should fit together. A good site template, recipe, or scaffold packages that expertise so the agent can take several correct steps at once instead of reconstructing the path from scratch.

Verification is the cost of knowing whether the work succeeded. Tests, clear errors, inspectable state, and fast debugging cycles help the agent compare what happened with what should have happened. As I wrote in AI rewards strict APIs, agents do not struggle with complexity; they struggle with ambiguity.

Each cost burns tokens, meaning the AI agent has to spend more of its limited context and reasoning budget reading documentation, comparing different options, or recovering from failed attempts.

What helps agents helps people

This is not just an AI problem. People have always preferred software that is easy to get running, gives them a clear path forward, and tells them when something worked. AI agents make the same preference more obvious because they have even less room for trial and error.

Developer Experience (DX) makes software easier for developers to evaluate, build with, and maintain. Agent Experience (AX) makes software easier for agents to install, modify, and verify.

In practice, the overlap is large. Better scaffolding, clearer errors, faster setup, opinionated best practices, and reliable tests help agents, but they also help developers, evaluators, and contributors.

Open Source still has to compete

The cheap-to-run advantage will not belong to Open Source forever. Proprietary vendors and SaaS companies are adding free tiers, programmatic access, and Model Context Protocol servers that give agents tools and context with less friction.

Open Source's structural advantage is about to expand, but it will concentrate in the projects that are easiest for agents to understand, run, and improve.

Every software project will have to earn its place in the agent flow. Being open will get you considered, but being easy to discover, install, inspect, modify, and verify will get you chosen.

Back in 2015 I wrote about running Varnish 4.x on systemd

, when the RedHat-family packages moved your startup options out of /etc/sysconfig/varnish and into a new /etc/varnish/varnish.params file that the unit sourced.

June 07, 2026

A long time ago I wrote a Varnish tip about seeing which cookies get stripped in your VCL

. The idea is still one of the most useful things you can do with varnishlog: compare what the browser sent against what reached your backend, so you can confirm your cookie-stripping rules do what you think.

June 06, 2026

I wrote a piece over on the Oh Dear blog about a failure mode that most uptime monitoring gets wrong: a domain gets pulled from its registry’s zone, but its authoritative nameservers keep answering, and cached resolvers happily serve the stale delegation for days. Your monitoring says green. The domain is gone.

Years ago I posted a handful of Varnish 3.0 one-liners for varnishtop and varnishlog

. They were all variations on the same idea: pick a log tag like RxURL or TxURL, then grep for what you wanted.

June 05, 2026

For years, prefetching made me uneasy. It can make websites feel faster, but it also asks visitors to spend bandwidth, CPU, memory, and battery on pages they may never open. That always felt a little wasteful, and maybe even a little disrespectful.

That unease also comes from a deeper belief: prefetching should not be a substitute for a fast site. Too many sites are weighed down by unnecessary JavaScript, tracking scripts, third-party widgets, heavy fonts, and oversized assets. Prefetching should not be used to hide that bloat. Before considering prefetching, make your site light and fast.

A couple months ago, while updating my HTTP header analyzer, I added support for the Speculation-Rules HTTP header. Learning about the Speculation Rules API inspired me to try it on my own blog.

The idea is simple: a page can give the browser a small JSON rule set that says which links are safe to prefetch, and when. Those rules can live directly in the HTML using <script type="speculationrules">, or in an external file referenced by the Speculation-Rules HTTP header.

For my blog, I added the rules directly to the HTML of every anonymous page request:

<script type="speculationrules">

{

"prefetch": [{

"where": {

"and": [

{ "href_matches": "/*" },

{ "not": { "href_matches": "/search*" } }

]

},

"eagerness": "conservative"

}]

}

</script>

The rule tells browsers that any same-origin link is safe to prefetch, except for paths under /search*.

The eagerness: conservative setting fires the prefetch on pointerdown or touchstart, meaning the browser only starts prefetching once the user begins to click or tap a link. There are more aggressive options, such as prefetching when a link becomes visible or when a user hovers over it.

Some of you might point out that browsers have supported prefetching for years through the older <link rel="prefetch"> tag. That is true, but I've never loved it.

Traditional prefetching is great when the next page is highly predictable, like the next step in a checkout flow or setup wizard.

On many websites, including my blog, it's anyone's guess what a visitor will click next. Sometimes you can make a smarter guess, but it is still a guess.

And when you guess wrong, visitors spend bandwidth, battery, and compute on pages they never visit. Multiply that across millions of sites and visitors, and those speculative requests add up.

So why implement Speculation Rules? My site was already fast without being static. With eagerness: conservative, the browser waits until the user has already started an action. At that point, the navigation is no longer a vague prediction. It is very likely to happen.

Speculation Rules also respect Battery Saver and Data Saver modes. If a device is low on battery, memory constrained, or trying to conserve data, the prefetching is skipped.

So is prefetching still worth it when the user has already started to click? I think so. With eagerness: conservative, the browser only gets a small head start but something is better than nothing.

Browsers already do some speculative loading on their own without Speculation Rules, but only for high-confidence destinations, like the address bar suggestion you are typing toward.

But they will not prefetch arbitrary links on a page, and for good reason. Prefetching /logout, for example, would sign the visitor out, even if they change their mind and never complete the click or hit Enter.

That is why Speculation Rules can be useful. You can tell the browser which paths are safe and which to leave alone.

In short, Speculation Rules changed my mind because they make prefetching feel more responsible: don't prefetch too much, don't prefetch too early, and only give the browser a safe hint when the user's intent is clear.

Back in 2016 I gave a talk called Varnish Explained and wrote it up slide by slide. It still gets traffic, which is nice, except the version numbers on those slides are now a decade old and the project doesn’t even go by the same name anymore.

June 04, 2026

When I wrote about running HTTP/3 with Caddy in 2020

, it was a ritual. You opted in with an experimental_http3 global option, the protocol was still draft h3-27, and to test it you needed a custom-compiled curl and a browser nightly with the right flags flipped. It worked, but it was clearly the bleeding edge.

June 03, 2026

Today the European Commission released the European Technological Sovereignty Package, a big push to reduce Europe's dependence on foreign technology.

Earlier this year, the Commission ran a public consultation, and I contributed two articles to it: the Software Sovereignty Scale and a follow-up, The Sovereignty Prerequisite.

So when the package was published today, I skimmed it right away. I was pleasantly surprised to find one of my articles cited in a footnote on page 18!

I won't pretend to have fully digested it yet, but one part immediately caught my attention: a new Open Source Strategy for Europe (Section 4 of the PDF, starting on page 16).

The highlights are significant:

- Around €2 billion over seven years to fund and maintain critical Open Source projects.

- "Public money, public code", so publicly funded software is released openly.

- Support for European foundations that can steward key Open Source projects.

- Open Source encouraged across research funding.

- An "Open Source"-first principle for public procurement.

One of the best parts of the strategy is that it treats Open Source as infrastructure that needs sustained investment, not as free software that magically maintains itself. I'll admit, that made me happy.

It is an argument Open Source advocates have made for years, and one I made in Funding Open Source like public infrastructure. The Commission now seems to agree, pointing to the lack of sustained funding, uncertain maintenance, and procurement barriers that hold Open Source back.

Just as important, the strategy reframes why Open Source matters. The old argument for Open Source was mostly about saving money. Here, Open Source is treated as a path to Europe's technological independence: software that Europe can inspect, maintain, and control. In other words, software that gives Europe "freedom of action".

None of this came out of nowhere. The story starts with the 2024 Draghi report, the Commission's landmark diagnosis of why Europe fell behind the United States and China. The Commission spent the next year turning that diagnosis into policy, and today's strategy is one of the results.

You can see how far the thinking has moved just by counting. In Draghi's 412 pages, "Open Source" appears twice. In the new plan, it appears nearly 300 times, in roughly a tenth of the space. It really shows that Open Source has moved from the margins of Europe's competitiveness debate into the center of its sovereignty strategy.

Still, it is worth being clear about what kind of document this is. This is not a law. It does not require companies to use Open Source or rewrite procurement rules across Europe. But it still matters. It moves Open Source from principle to policy: part of Europe's sovereignty agenda, backed by real funding, and a step toward stronger procurement rules.

The strategy notes that "the EU currently spends EUR 264 billion a year mostly on US proprietary IT products and services". That is not the Commission's budget; it is what the broader European economy spends each year on American software.

Set against that number, €2 billion over seven years for Open Source is a start, but a very small one. Seven years of Europe's Open Source budget is roughly three days of its annual American software bill. Europe has started to treat Open Source as sovereignty infrastructure, but it is not yet funding it like sovereignty infrastructure.

The strategy also stops one word short. In procurement, it tells public bodies to choose Open Source "first", not that they must. But "first" is only a preference. It is the kind of thing you talk yourself out of when the demo is shiny and the deadline is close.

For the systems a society cannot afford to lose, Open Source should not be preferred. It should be required. Europe is not there yet, but this is an excellent step in that direction.

Update: the fix described in this post has since been merged into Laravel and ships in the next

13.xrelease. From that release on, a child class’s plain$timeoutproperty correctly overrides an attribute inherited from a parent, so the specific footgun below no longer bites. This post stays up as a historical write-up: how the bug behaved, the subtle PHP reflection edge case behind it, and the fix that closed it.

Ten years ago I wrote about Google’s QUIC protocol , back when it was an experiment you could realistically only test against Google’s own servers. I was excited about it, and I ended that post hoping the spec would get standardised and show up in other browsers and servers.

June 02, 2026

At Oh Dear , our status pages can run on a customer’s own domain, and we’ve always served on-demand ACME certificates for them: someone points their domain at us, and Caddy provisions a Let’s Encrypt certificate for it automatically. We’re now adding a second option, letting customers bring their own certificate. For that, Caddy doesn’t provision anything; it fetches the certificate from an HTTP backend in our app.

Back in 2016 I wrapped nginx in a Docker container just to get HTTP/2 working. Not because I love Docker, but because the server’s OpenSSL was too old to do ALPN, and the cleanest way to borrow a modern OpenSSL was to lift one out of an Alpine container. It worked, but it was a hack, and I knew it at the time.

June 01, 2026

This morning, Salesforce announced its plan to acquire Contentful.

Congratulations to Sascha Konietzke, Paolo Negri, and the whole Contentful team. They spent 13 years building Contentful into one of Europe's most visible enterprise software companies. Salesforce buying Contentful is real validation of the product, customers, and team they built.

The deal makes sense for both Salesforce and Contentful. Salesforce has long had a CMS-shaped hole in its product offering, and Contentful fills it with a mature, enterprise-ready SaaS product.

Contentful last raised money in 2021, at a valuation of more than $3 billion, when SaaS valuations were near their peak and enthusiasm for headless CMS was at its highest. Since then, valuations have come down, and developers have become more pragmatic about when a headless CMS makes sense.

To me, the more important question isn't whether the acquisition makes strategic sense (it does), or whether every Contentful investor got the outcome they hoped for (probably not). It is what the acquisition means for digital sovereignty.

Before I go further, let me be clear about where I'm coming from. I founded Drupal and still lead the project, and I co-founded Acquia, a company built around Drupal, where I'm still the Executive Chair. Drupal is an Open Source CMS that competes with Contentful. So when I argue that this deal raises important questions, you should factor in that Open Source is both my life's work and my livelihood.

Contentful is a German company, Contentful GmbH, registered in Berlin. For over a decade it has been a flagship European software company.

If the acquisition closes, it becomes part of Salesforce, a US corporation, and falls under US law.

For many of Contentful's customers, this acquisition will be a non-event. For governments, public institutions, and regulated industries, it exposes a harder truth: a vendor being European today is no guarantee it stays European tomorrow.

A practical example is the US CLOUD Act. Many people may not know about it, but it becomes relevant anytime a non-US vendor is acquired by a US company.

In plain English, the CLOUD Act means that US authorities can require any US company to disclose data it controls. That can apply even if its data is stored in Europe, managed by a European team, or running on European infrastructure.

This is not a hypothetical concern. The law came out of a dispute between Microsoft and the US government over emails stored in Ireland. US Congress changed the law while the case was pending, making clear that US providers can be required to produce data stored abroad.

That does not make Contentful a bad company. It does not make Salesforce a bad owner. And it does not take anything away from what the Contentful team built.

But it shows the limit of "Buy European". Contentful spent 13 years as a trusted European vendor, and one board meeting is enough to put it under US law.

That is where Open Source matters. Drupal customers running on Acquia, my own US-based company, are also exposed to US law. But because Drupal is Open Source, they have alternatives: they can move to a European hosting partner, self-host, or fork the code. A Contentful customer cannot do the same.

The Contentful team deserves credit for what they built. Few European software companies have reached its scale and size. But this is also a reminder for Europe. For software that governments, public institutions, and critical industries depend on, sovereignty must survive any acquisition.

That is the point of The Software Sovereignty Scale and The Sovereignty Prerequisite that I submitted to the European Commission as feedback on their Cloud Sovereignty Framework.

Open Source is the only way to guarantee long-term choice, control, and governance over your code, data, and infrastructure.

Special thanks to Tiffany Farriss for her review of this blog post.

I’m finding myself thinking longer about the code I write and the features that get developed, than I did before the agentic coding era.

Qucocoma Philippe Samyn ?

J’apprends avec tristesse le décès de l’architecte Philippe Samyn, un esprit incroyable aux idées fulgurantes avec qui j’ai eu de longues et passionnantes discussions.

Car l’architecture était pour lui une porte d’entrée vers une compréhension plus globale du monde. Comme lorsqu’il m’a asséné :

— Le monde entier ne tourne qu’autour d’une seule et unique unité, qui est le cœur de tout.

— Euh, le dollar ?

— Tu me déçois Lionel ! C’est le joule bien entendu. Tout tourne autour du joule. Produire, stocker, échanger des joules. La monnaie internationale devrait être le joule ! Les puissants sont ceux qui exploitent la différence entre le dollar et le joule, les faibles ceux qui fournissent des joules pour rien ou si peu.

Ou cette discussion qui me revient régulièrement où il m’exposa que l’architecte devait construire des « ruines utiles ». Car un bâtiment ne va être utilisé que 50, 100 ou 300 ans. Mais ses ruines durent parfois 10 ou 100 fois plus longtemps. Certaines ruines dureront autant que la planète ! L’essentiel de la vie d’un bâtiment se fait à l’état de ruine et il faut le prévoir dès la conception.

Avant de le rencontrer, je n’avais jamais envisagé les choses sous cet angle. Ce qui m’a frappé en généralisant son discours, c’est à quel point le fait de tenter d’oublier l’étape des ruines est la maladie qui pourrit l’humanité tout entière à tous les niveaux.

Nous produisons, nous tentons de produire plus et plus rapidement sans jamais nous préoccuper de ce que nous allons faire de toute cette production. Nous en sommes au point d’automatiser la production d’images, d’écrits et de code informatique avec les chatbots sans nous poser la question des ruines que nous préparons.

Depuis plusieurs années, chaque fois que j’ai envie de me procurer un bien matériel, je me pose consciemment deux questions : « Où vais-je le ranger ? » et « Comment vais-je m’en débarrasser ? ». Penser consciemment à cette question au moment de l’achat rend l’achat en lui-même extrêmement anxiogène. Philippe Samyn avait lui poussé cette réflexion jusque dans la conception des bâtiments.

Mais l’ingénieur architecte était également un artiste attaché à son œuvre. Lorsque mon épouse et moi avons un jour critiqué « son » Aula Magna, bâtiment emblématique de Louvain-La-Neuve coincé entre un cinéma et un hôtel, il nous a déroulé, des larmes pleins les yeux, le plan de Louvain-la-Neuve tel qu’il l’avait imaginé et comment l’Aula Magna aurait du s’intégrer dans une superbe perspective bien plus vaste et cohérente. Une boule de colère et de tristesse dans la gorge, il nous a expliqué comment son projet avait été complètement dénaturé en n’en prenant qu’une partie et en l’encadrant d’autres immeubles qu’il trouvait hideux.

Philippe Samyn m’avait fait l’honneur de me compter parmi les bêta-lecteurs de l’œuvre de sa vie : un ouvrage exhaustif sur l’architecture et la société humaine, projet qu’il avait intitulé: « Qucocoma, Quoi Comment Construire Maintenant ».

Je lui avais prédit que cette œuvre risquait de ne jamais être achevée tant elle était ambitieuse, qu’il fallait absolument la publier par petite partie sous peine de laisser cette tâche à ses héritiers.

Je ne pensais pas avoir raison si tôt…

Philippe Samyn s’est éteint, mais le territoire reste à jamais marqué par ses idées.

À propos de l’auteur :

Je suis Ploum et je viens de publier Bikepunk, une fable écolo-cycliste entièrement tapée sur une machine à écrire mécanique. Pour me soutenir, achetez mes livres (si possible chez votre libraire) !

Recevez directement par mail mes écrits en français et en anglais. Votre adresse ne sera jamais partagée. Vous pouvez également utiliser mon flux RSS francophone ou le flux RSS complet.

May 26, 2026

In Open Source software, competition works differently than in proprietary software.

Companies compete through their own products and services, but they all depend on the same commons: the software, the community, the project's reputation, and the shared work that helps people trust and adopt it.

That shared foundation creates a different kind of responsibility: sharing a commons means sharing the work of keeping it strong.

The Open Source companies I admire most show up in two ways. They compete on the merits of their own products: features, support, and price. And they help sustain the commons: through code, documentation, security, marketing, events, education, sponsorships, and more.

Judge companies by what they do

Over the past year, Pantheon, one of Acquia's competitors in the Drupal market, has focused much of its messaging on attacking Acquia, including making our private equity ownership part of its story.

I have no quarrel with Pantheon's products or the people who build them. Competition is healthy. My concern is with marketing that attacks another Drupal company, often with misleading or unwarranted messaging.

I've spent nearly twenty years building Acquia through different stages and ownership models. Acquia has grown from a startup into a company backed first by venture capital and later by private equity. Every ownership model creates different pressures, but ownership determines far from everything.

Customers don't choose a platform because of an ownership model. They choose it because it works, because they can get help, and because they trust the platform will keep getting better. In Open Source, that trust depends on the health of the commons behind it.

Customers, partners, community members, and end users are not helped by vendor attacks. They are helped when companies build better products, contribute to Drupal, and help more people adopt it.

License permits, stewardship grows

For an Open Source company, the test is not only what they build for themselves. It is what they help build for everyone.

An Open Source license defines what companies are allowed to do. It sets the floor. Contribution is not required.

Above that floor is a social contract. No one enforces it, but every healthy Open Source ecosystem depends on it.

Stewardship is what companies choose to do beyond the license: contribute code, fund security work, support maintainers, improve documentation, sponsor events, promote adoption, and more.

Drupal thrives because people and organizations honor the social contract and choose to do more than the license requires.

Contribution is one measure of stewardship

Drupal.org credit is one public signal of that commitment. Acquia is the largest single corporate contributor to Drupal, but the wider community contributes far more than any one company.

In the past year, Acquia engineers earned 26,331 weighted issue credits, plus 164 from the Drupal Security Team.

These contributions are good for Acquia, for Drupal, and for every organization that builds on Drupal, including our competitors.

In the same period, Pantheon earned 243 weighted issue credits, plus 2 security credits. Credits don't capture every form of contribution, and Pantheon contributes in other ways too. Even so, the gap is substantial.

What we let pass becomes the social contract

I don't usually write publicly about competitors. It's not how I want to spend my voice.

Before writing this, I asked myself a simple question: if a major company contributing to Drupal were under sustained attack from another major Drupal company, would I feel a responsibility as Drupal's founder and project lead to speak up?

I would.

The fact that Acquia is the company being attacked made me slower to respond, but it doesn't change the answer.

When companies built on Drupal spend their energy attacking each other instead of growing the project, it bothers me. It's not good for Drupal.

I'm not writing this believing it will change anyone's marketing and sales tactics. I'm writing it because what we let pass now will shape what is acceptable in Drupal years from now.

Communities like ours evolve their social contract through moments like this, when we say in public what we expect of each other. If this post contributes to a healthier social contract taking hold, I'm happy.

Compete on merit, but grow the commons

Every company that builds on Drupal depends on the same commons. Every company has a choice about whether to help sustain it, and how much. Drupal gets stronger when more of us invest in it.

My invitation to every company that builds on Drupal is simple: let's compete on the merits of our products and services, not by attacking each other. Let's serve customers well, contribute where we can, and put our energy into helping more organizations choose Drupal in the first place.

That is the social contract I'd like all of us to live by. I want Acquia to be judged by that same standard: what we ship, how well we serve customers, how much we contribute, and whether Drupal is stronger because of our work.

Not by who owns us. Not by claims made about us. By whether we keep building, contributing, and helping the ecosystem grow.

I have said what I wanted to say, and I won't turn this into an ongoing debate or respond to social media comments on this. My focus is on building and contributing.

May 20, 2026

Last week at Drupal South, Pamela Barone delivered a keynote on Drupal CMS. Her talk is one of the clearest articulations I've seen of what Drupal CMS is, why it exists, and where it's headed. That shouldn't come as a surprise because Pam is the Product Lead for Drupal CMS.

Pam quoted a familiar Drupal saying: Drupal makes hard things possible, but it also makes easy things hard.

. The room laughed because it's true.

Her keynote was about how Drupal CMS is helping to fix that. Drupal CMS is making Drupal easier to learn, easier to use, and easier to sell, without removing any of Drupal's power and flexibility. It brings visual page editing, a smoother path for new developers, and better project economics.

And these improvements are not just interesting for smaller projects. Universities, governments, and large enterprises want the same benefits. That is why Drupal CMS matters at every scale.

Pam also explains how Drupal CMS sits on top of Drupal Core, why it is not a Drupal distribution, how it gives digital agencies leverage, what site templates unlock, and how Drupal Canvas reshapes the page building experience.

If you watch one Drupal video this week, make it Pam's!

May 17, 2026

I saw two thoughtful posts in my LinkedIn feed over the last week that I wanted to reshare here before the LinkedIn feed buried them. Both were spot on, honest, and deserve a longer shelf life.

The first was from Hynek Naceradsky:

I'm pissed.

Not at Drupal. At the people confidently hating on it without ever having understood what it actually does.

"Drupal is outdated." "Drupal is too complex." "Nobody uses Drupal anymore."

Tell that to the EU institutions, governments, universities, and enterprises quietly running mission-critical platforms on it.

Here is what actually gets me though: the Drupal community lets this narrative win.

I am guilty of this too.

We literally have thousands of contributed modules, maintained for free, by people who owe you absolutely nothing. The security team responds faster than most paid vendors. The community has been showing up for 20+ years.

And yet we're somehow losing the PR war to frameworks that can't handle a proper content workflow without three paid plugins and a prayer.

Drupal people: talk louder. Write the posts. Go to the meetups. Tell the stories, fight for Drupal.

Because the Drupal community is honestly the best thing in Open Source, and both it and Drupal deserve way better than silence.

The second was from Thomas Scola, writing from a Drupal AI event in New York (lightly trimmed):

I overheard a couple people say, "Drupal? Is that still around?"

Hell yes it is.

And not only is it still around, I'd argue pretty heavily that Drupal is uniquely positioned for what comes next with the agentic web.

API-first before API-first was cool and trendy. Structured content that actually makes sense. Mature permissions, workflows, governance, integrations.

A lot of platforms are now scrambling to figure out how AI fits into what they already built.

Drupal doesn't have to force it. The architecture has been there.

But honestly, the tech is only part of it. The community is what always gets me. The people, passion and innovation. [...]

What comes next? Who knows.

But if I'm betting on a community to adapt, build, and help define that future, I'm putting my money on this one, and on what we've all built together.

For a platform people love to ask if it's "still around", it feels more relevant than ever.

I could not agree more with both posts. Drupal is one of the strongest Open Source platforms out there right now, but too few people realize it. The Drupal community has been modernizing the platform faster than its reputation evolves.

If the loudest narrative about Drupal is that it is outdated, people will keep repeating it, even when it is wrong. AI systems will too, because they absorb the same narratives, blog posts, forum threads, and social media the rest of the industry does.

The danger is not just that Drupal is misunderstood today. It's that the gap between perception and reality may be growing, not shrinking.

The narratives we reinforce today become part of how AI describes Drupal tomorrow. The Drupal community's silence today becomes tomorrow's AI consensus.

So if you're in the Drupal community, take Hynek's advice and help set the record straight. Not for AI, but for people. Write about the great work happening in Drupal: share the case studies, the technical breakthroughs, the AI innovation, the shared learnings, and the hard problems being solved every day.

We need to spend a lot more time explaining where Drupal fits, the kinds of problems it solves well, and why so many organizations believe in Open Source and the Drupal community.

I know many people in Open Source dislike marketing or self-promotion. I do too, sometimes. But if we don't document what is great about Drupal, others will define Drupal for us.

Every accurate case study, technical blog post, demo, presentation, or community success story helps future developers, evaluators, and AI systems understand what Drupal actually is.

Drupal does not need hype. It needs a better public record.

May 14, 2026

Today Acquia announced something I'm really proud of. We're calling it the Acquia Fair Trade Initiative.

When an Acquia partner closes a deal, 2% of that deal flows directly to the Drupal Association, credited in the partner's name, to fund Drupal's infrastructure and long-term growth. This is in addition to the millions of dollars Acquia already invests in Drupal each year.

Imagine an Acquia partner closes a $100,000 Drupal deal with Acquia. $2,000 goes to the Drupal Association, attributed to that partner. The 2% comes from Acquia, not from partner margins, so the partner keeps their full revenue and incentives.

The donation is publicly attributed in the Acquia Partner Portal and counts toward the partner's standing in the Drupal Association's Certified Partner Program. It is recognized as financial support for the Drupal Association, separate from non-financial contributions like code, case studies, or community participation.

Most of all, I like that this program is structural. It is not a one-time gift or sponsorship campaign. It is built into the economics of Acquia's partner program, so Drupal's funding grows automatically as Acquia and its partners grow.

Too often, funding for Open Source projects depends on periodic fundraising or individual goodwill. That can work, but it rarely scales in a predictable way.

Open Source sustainability works best when incentives align. With the Fair Trade Initiative, the Drupal Association receives more predictable funding, partners receive recognition through the Drupal Association's Certified Partner Program, and Acquia invests in the long-term health of the Drupal ecosystem its business depends on. And yes, this also creates more incentive for partners to work with Acquia on Drupal projects. Drupal wins, Acquia's partners win, and Acquia wins too. That is what incentive alignment looks like.

I set a reminder for myself to report back in a year, maybe sooner. I'm curious to see what this model can become.

May 13, 2026

I’ve been an enthusiastic contributor in the Open Source community for over 25 years. During my career, I’ve worked with and on Open Source software from multiple angles: as a builder, user, seller, customer, and investor. I’ve seen projects grow and falter. I’ve seen how licensing and business decisions can both destroy or boost projects and their communities. Though the biggest killer of promising projects and businesses is probably blind spots (challenges that were not expected or well understood).

I was the first engineering hire when Grafana Labs was founded. I was never a founder, board member, or executive, but I worked directly with its exceptional founders, executives and leaders. It’s where I learned how to build Open Source software and enterprise OSS businesses the right way. I learned about productive collaboration with communities, with customers, and with colleagues regardless of which department they’re in. I also learned the subtle interdependencies between GTM, engineering, and support, and what it really takes to build, launch, and sell products. It takes many revisions of products, their positioning, and licensing strategy (among others).

After 9 years I left Grafana and started exploring many cool OSS projects and people building them. It turned out I have some relevant experience that is complementary to their own expertise. So I started investing in cool projects and refining my thesis on commercial Open Source models, including Open Core, Source Available, and Fair Source. (see Fair Source: the next best model for commercial open source?)

Cool Open Source Software - and the people that build them - deserve support and funding.

- For funding, sometimes the answer is Venture Capital. Sometimes it’s staying independent and bootstrapping, or using donations or endowments (e.g. OSS Pledge, Open Source Endowment, etc)

- For support, sometimes you just need a free friendly chat for advice. Sometimes you need a consultant, or an advisor. I’m happy to help in any way I can.

I don’t claim to be the world’s expert, but the few startups that worked with me were glad they did, so I decided to launch a consulting business. If this sounds interesting, check out the Consulting/advisory page for more details.

May 09, 2026

Ikea Starkvind and Home Assistant

We recently bought an Ikea Starkvind Air Purifier, which supports Zigbee. I wanted to find out what I could do with it from within Home Assistant, possibly automating when it runs and when not. I also wanted to add some UI elements, like the mushroom fan card or the air purifier card, both of which rely on there being a fan entity.

Zigbee integration with deCONZ

I already had a ConbeeII Zigbee USB controller in use with the deCONZ Integration. Pairing the Starkvind was a matter of telling the Phoscon Software (which comes with the deCONZ integration) to scan for new sensors and pushing the pairing button on the Starkvind.

Surprisingly enough, only three entities showed up in Home Assistant:

- Air Purifier PM25

- Fan Mode

- Filter Runtime

deCONZ entities exposed through Home Assistant for the Starkvind Air Purifier

deCONZ entities exposed through Home Assistant for the Starkvind Air Purifier

When looking in the deCONZ application there were a lot more attributes:

deCONZ cluster information

deCONZ cluster information

The deCONZ integration uses a python library for deconz, and in issue #322 I found that only these three items were actually requested to be added. I have since requested some more, but it’s uncertain when and if those will be made available.

I came across this blog post by OyWin, detailing how they used the REST sensors to add their Starkvind into Home Assistant. While the approach was definitely the right way to go, I was not a fan of doing so many individual REST calls (one per sensor) as it’s not needed - Home Assistant can handle it in 1 call per REST-API target.

deCONZ REST-API

Checking the deCONZ REST-API documentation for the Starkvind, there are a lot more attributes available, published under different devices: ZHAAirPurifier and ZHAParticulateMatter

The ones I wanted were:

ZHAAirPurifier

| Section | Attribute | Exposed via deCONZ integration | R/O or R/W |

|---|---|---|---|

| Config | filterlifetime | x | Read/Write |

| Config | ledindication | x | Read/Write |

| Config | locked | x | Read/Write |

| Config | mode | ✓ | Read Write |

| Config | on | x | Read Only |

| State | deviceruntime | x | Read Only |

| State | filterruntime | ✓ | Read Only |

| State | lastupdated | x | Read Only |

| State | replacefilter | x | Read Only |

| State | speed | x | Read Only |

ZHAParticulateMatter

| Section | Attribute | Exposed via deCONZ integration | R/O or R/W |

|---|---|---|---|

| State | measured_value | ✓ | Read Only |

| State | airquality | x | Read Only |

Time to get those into Home Assistant.

Configuring the deCONZ REST-API port

In order to be able to query the deCONZ REST-API, you need to make sure a port is configured in Home Assistant → Settings → Apps → deCONZ → Configuration

deCONZ App Network Configuration

deCONZ App Network Configuration

If this port is set you’ll be able to issue http queries to the URL of your Home Assistant installation on the port specified. In my case this is http://home-assistant.internal:40850/

Finding the correct API urls

To find the correct API endpoints to use go to Home Assistant → deCONZ → Phoscon. Open the hamburger menu on the left and pick Help → API Information

Phoscon API Information screen

Phoscon API Information screen

In the subsequent screen, pick “Sensors” and look for “Ikea STARKVIND Air Purifier”. You should find two entries in the dropdown:

Phoscon API Information for the Starkvind Air Purifier

Phoscon API Information for the Starkvind Air Purifier

Once you click on one of the sensors, you will get a dump of what the API returns, and on top of that window, the API endpoint URL. In my example this reads:

//home-assistant.internal:8123/api/hassio_ingress/juXMtc1g4Z85iNwXSis58q2z7Kw7XO0Lz5k2X6cBsZ0/api/792DA42905/sensors/93. The converted direct unauthenticated URL becomes http://home-assistant.internal:40850/api/792DA42905/sensors/93.

Phoscon API information for the ZHAAirPurifier entity of the Starkvind Air Purifier

Phoscon API information for the ZHAAirPurifier entity of the Starkvind Air Purifier

792DA42905 is your own API key, and 93 is the internal numbering of deCONZ for your sensor.

Now, this URL allows you to query the API from the outside. I did not need this as I wanted to run the queries from inside Home Assistant. You can find the internal url by going to Home Assistant → Settings → Devices & services, selecting the deCONZ integration and picking the Conbee2. In the Service Info there is a “Visit” link, which shows you the internal hostname to use.

deCONZ Conbee2 Service Information

deCONZ Conbee2 Service Information

This will usually be core-deconz, so the URL becomes http://core-deconz:40850/api/<apikey>/sensors/<sensor-id>.

Home Assistant Configuration

Creating the REST sensors

Using the URL assembled above I added the sensor and binary_sensor entities to Home Assistant.

- rest:

- resource: http://core-deconz:40850/api/792DA42905/sensors/93

binary_sensor:

- name: Ikea Starkvind Led Indication

value_template: "{{ value_json.config.ledindication }}"

unique_id: ikea_starkvind_led_indication

- name: Ikea Starkvind Locked

value_template: "{{ value_json.config.locked }}"

unique_id: ikea_starkvind_locked

- name: Ikea Starkvind Sensor On

value_template: "{{ value_json.config.on }}"

unique_id: ikea_starkvind_sensor_on

- name: Ikea Starkvind Replace Filter

value_template: "{{ value_json.state.replacefilter }}"

unique_id: ikea_starkvind_replace_filter

sensor:

- name: Ikea Starkvind Filter Runtime

value_template: "{{ value_json.state.filterruntime }}"

unique_id: ikea_starkvind_filter_runtime

device_class: duration

unit_of_measurement: min

- name: Ikea Starkvind Device Runtime

value_template: "{{ value_json.state.deviceruntime }}"

unique_id: ikea_starkvind_device_runtime

device_class: duration

unit_of_measurement: min

- name: Ikea Starkvind Filter Lifetime

value_template: "{{ value_json.config.filterlifetime }}"

unique_id: ikea_starkvind_filter_lifetime

device_class: duration

unit_of_measurement: min

- name: Ikea Starkvind Mode

value_template: "{{ value_json.config.mode }}"

unique_id: ikea_starkvind_mode

- name: Ikea Starkvind Fan Speed

value_template: "{{ value_json.state.speed }}"

unique_id: ikea_starkvind_fan_speed

state_class: measurement

- name: Ikea Starkvind Last Updated

value_template: "{{ value_json.state.lastupdated + 'Z' }}"

unique_id: ikea_starkvind_lastupdated

device_class: timestamp

Enabling setting the led indicator

To be able to update the ledindication, I added a binary helper called ikea_starkvind_ledindication as a toggle, a rest_command to set it, and an automation to bind the two together:

rest_command:

ikea_starkvind_set_ledindication:

url: "http://core-deconz:40850/api/792DA42905/sensors/93"

method: put

content_type: "application/json; charset=utf-8"

payload: '{ "config": { "ledindication": {{ states("input_boolean.ikea_starkvind_ledindication") | bool | lower }}}}'

alias: Ikea Starkvind - Sync Led Indication

description: ""

triggers:

- trigger: state

entity_id:

- input_boolean.ikea_starkvind_ledindication

actions:

- action: rest_command.ikea_starkvind_set_ledindication

data: {}

mode: single

Additional sensors

I also added a template sensor to calculate the lifetime left of the filter:

template:

- sensor:

name: Ikea Starkvind Filter Lifetime Remaining

state: "{{ states('sensor.ikea_starkvind_filter_lifetime') | int - states('sensor.ikea_starkvind_filter_runtime') | int }}"

unique_id: ikea_starkvind_filter_lifetime_remaining

device_class: duration

unit_of_measurement: min

Creating a Fan entity

To use the premade cards I needed a fan entity. This can be created as a template, based off of the previously created entities:

- fan:

- name: "IKEA Starkvind"

unique_id: ikea_starkvind_fan

availability: "{{ states('select.ikea_starkvind_fan_mode') not in ['unknown', 'unavailable'] }}"

state: "{{ states('select.ikea_starkvind_fan_mode') != 'off' }}"

percentage: >

{% set map = {

'speed_1': 20, 'speed_2': 40, 'speed_3': 60,

'speed_4': 80, 'speed_5': 100

} %}

{{ map.get(states('select.ikea_starkvind_fan_mode'), 0) }}

preset_mode: >

{% if states('select.ikea_starkvind_fan_mode') == 'auto' %}auto{% endif %}

preset_modes:

- auto

speed_count: 5

turn_on:

action: select.select_option

target:

entity_id: select.ikea_starkvind_fan_mode

data:

option: auto

turn_off:

action: select.select_option

target:

entity_id: select.ikea_starkvind_fan_mode

data:

option: "off"

set_percentage:

action: select.select_option

target:

entity_id: select.ikea_starkvind_fan_mode

data:

option: >

{% if percentage == 0 %} off

{% elif percentage <= 20 %} speed_1

{% elif percentage <= 40 %} speed_2

{% elif percentage <= 60 %} speed_3

{% elif percentage <= 80 %} speed_4

{% else %} speed_5

{% endif %}

set_preset_mode:

action: select.select_option

target:

entity_id: select.ikea_starkvind_fan_mode

data:

option: "{{ preset_mode }}"

Home Assistant Cards

I tried out a few cards to see what I liked.

Custom Purifier Card

My first try was the custom air purifier card with this configuration:

type: custom:purifier-card

entity: fan.ikea_starkvind

aqi:

entity_id: sensor.ikea_starkvind_air_quality_measured_value

unit: μg/m³

stats:

- entity_id: sensor.ikea_starkvind_filter_lifetime_remaining

value_template: "{{ (value | float(0) / 60 / 24 ) | round(1) }}"

unit: days

subtitle: Filter Life Remaining

shortcuts:

- name: Speed 1

icon: mdi:weather-night

percentage: 20

- name: Speed 2

icon: mdi:circle-slice-2

percentage: 40

- name: Speed 3

icon: mdi:circle-slice-4

percentage: 60

- name: Speed 4

icon: mdi:circle-slice-6

percentage: 80

- name: Speed 5

icon: mdi:circle-slice-8

percentage: 100

- name: Auto

icon: mdi:brightness-auto

preset_mode: auto

It ended up looking like this, which did not thrill me.

Air Purifier Card

Air Purifier Card

Custom cards

I cobbled something together based on the mushroom-fan-card, the button-card, the template-entity-row and the lovelace-expander-card.

type: vertical-stack

cards:

- type: custom:mushroom-fan-card

entity: fan.ikea_starkvind

icon_animation: true

primary_info: name

secondary_info: state

show_percentage_control: true

collapsible_controls: true

show_direction_control: false

show_oscillate_control: false

- type: horizontal-stack

cards:

- type: custom:button-card

icon: mdi:circle-outline

entity: fan.ikea_starkvind

show_name: false

aspect_ratio: 1/1

tap_action:

action: call-service

service: fan.set_percentage

data:

percentage: 0

target:

entity_id: fan.ikea_starkvind

styles:

card:

- background-color: |-

[[[

return entity.state === 'off'

? 'rgba(var(--rgb-state-active-color), 0.2)'

: 'var(--ha-card-background)';

]]]

icon:

- color: |-

[[[

return entity.state === 'off'

? 'var(--state-active-color)'

: 'var(--secondary-text-color)';

]]]

- type: custom:button-card

icon: mdi:weather-night

entity: fan.ikea_starkvind

show_name: false

aspect_ratio: 1/1

tap_action:

action: call-service

service: fan.set_percentage

data:

percentage: 20

target:

entity_id: fan.ikea_starkvind

styles:

card:

- background-color: |-

[[[

return entity.state === 'on' && entity.attributes.percentage === 20

? 'rgba(var(--rgb-state-active-color), 0.2)'

: 'var(--ha-card-background)';

]]]

icon:

- color: |-

[[[

return entity.state === 'on' && entity.attributes.percentage === 20

? 'var(--state-active-color)'

: 'var(--secondary-text-color)';

]]]

- type: custom:button-card

icon: mdi:circle-slice-2

entity: fan.ikea_starkvind

show_name: false

aspect_ratio: 1/1

tap_action:

action: call-service

service: fan.set_percentage

data:

percentage: 40

target:

entity_id: fan.ikea_starkvind

styles:

card:

- background-color: |-

[[[

return entity.state === 'on' && entity.attributes.percentage === 40

? 'rgba(var(--rgb-state-active-color), 0.2)'

: 'var(--ha-card-background)';

]]]

icon:

- color: |-

[[[

return entity.state === 'on' && entity.attributes.percentage === 40

? 'var(--state-active-color)'

: 'var(--secondary-text-color)';

]]]

- type: custom:button-card

icon: mdi:circle-slice-4

entity: fan.ikea_starkvind

show_name: false

aspect_ratio: 1/1

tap_action:

action: call-service

service: fan.set_percentage

data:

percentage: 60

target:

entity_id: fan.ikea_starkvind

styles:

card:

- background-color: |-

[[[

return entity.state === 'on' && entity.attributes.percentage === 60

? 'rgba(var(--rgb-state-active-color), 0.2)'

: 'var(--ha-card-background)';

]]]

icon:

- color: |-

[[[

return entity.state === 'on' && entity.attributes.percentage === 60

? 'var(--state-active-color)'

: 'var(--secondary-text-color)';

]]]

- type: custom:button-card

icon: mdi:circle-slice-6

entity: fan.ikea_starkvind

show_name: false

aspect_ratio: 1/1

tap_action:

action: call-service

service: fan.set_percentage

data:

percentage: 80

target:

entity_id: fan.ikea_starkvind

styles:

card:

- background-color: |-

[[[

return entity.state === 'on' && entity.attributes.percentage === 80

? 'rgba(var(--rgb-state-active-color), 0.2)'

: 'var(--ha-card-background)';

]]]

icon:

- color: |-

[[[

return entity.state === 'on' && entity.attributes.percentage === 80

? 'var(--state-active-color)'

: 'var(--secondary-text-color)';

]]]

- type: custom:button-card

icon: mdi:circle-slice-8

entity: fan.ikea_starkvind

show_name: false

aspect_ratio: 1/1

tap_action:

action: call-service

service: fan.set_percentage

data:

percentage: 100

target:

entity_id: fan.ikea_starkvind

styles:

card:

- background-color: |-

[[[

return entity.state === 'on' && entity.attributes.percentage === 100

? 'rgba(var(--rgb-state-active-color), 0.2)'

: 'var(--ha-card-background)';

]]]

icon:

- color: |-

[[[

return entity.state === 'on' && entity.attributes.percentage === 100

? 'var(--state-active-color)'

: 'var(--secondary-text-color)';

]]]

- type: custom:expander-card

title: Details

cards:

- type: entities

entities:

- entity: sensor.ikea_starkvind_last_updated

name: Last Updated

- entity: input_boolean.ikea_starkvind_ledindication

name: Led Indicator

- entity: sensor.ikea_starkvind_air_quality

name: Air Quality Indicator

- type: custom:template-entity-row

state: >-

{{ states('sensor.ikea_starkvind_air_quality_measured_value') }}

µg/m³

name: Air Quality

icon: mdi:bacteria-outline

- entity: binary_sensor.ikea_starkvind_replace_filter

name: Replace Filter

- type: custom:template-entity-row

name: Filter Life Remaining

state: |-

{{ (states('sensor.ikea_starkvind_filter_lifetime_remaining') |

float(0) / 60 / 24)| round(1) }} days

icon: mdi:timelapse

animation: false

clear-children: false

This one I kept :)

Collapsed custom card

Collapsed custom card

Unfolded custom card

Unfolded custom card

May 08, 2026

May 07, 2026

May 06, 2026

Keeping up with major Drupal Core releases takes real effort. Each release deprecates APIs and introduces new coding patterns, forcing module developers to update their code.

That is how most software evolves: old patterns are gradually replaced by better ones.

Tools like Drupal Rector help automate parts of that work, but still rely on hand-written rules. Historically, that hasn't scaled well. Writing Rector rules is often more tedious than difficult: reading change records, understanding edge cases, finding real-world usage patterns, and testing rules.

So I asked a different question: what if we didn't have to write Rector rules at all?

If AI can generate Rector rules automatically, Drupal Core can keep evolving without every API change turning into manual migration work.

That idea led me to extend Drupal Digests, the tool I built to follow key Drupal developments. In addition to generating summaries, it now also analyzes Drupal Core commits and generates Rector rules automatically.

When a Drupal Core commit deprecates an API or introduces a new pattern, the tool reads the related issue, analyzes the discussion around it, reviews the code changes, and generates a corresponding Rector rule.

The system has only been running for a few weeks, yet it has already generated over 175 Rector rules, with new rules continuously added as the pipeline processes more Drupal Core issues.

AI-generated code is far from perfect. Some rules will have bugs, and others will miss edge cases. But that is exactly why I wanted to publish them now: the more people test them on real projects, the faster they will improve.

Special thanks to Björn Brala, co-maintainer of Drupal Rector, who discovered I was working on this and quickly jumped in to help test and validate some of the generated rules. That kind of feedback is incredibly valuable.

You can try them as follows:

git clone https://github.com/dbuytaert/drupal-digests.git

composer require --dev rector/rector

vendor/bin/rector process web/modules/custom \

--config drupal-digests/rector/all.php --dry-run

Example

Take Drupal's modernization of the $entity->original property, which exposed the unchanged copy of an entity. Drupal 11.2 deprecated the property in favor of explicit $entity->getOriginal() and $entity->setOriginal() methods. The old property will be removed in Drupal 12 so various module maintainers have to update their code.

Drupal Digests generated a Rector rule that rewrites read access to getOriginal() and write assignment to setOriginal().

Before:

$entity->original->field->value;

$entity->original = $unchanged;

After:

$entity->getOriginal()->field->value;

$entity->setOriginal($unchanged);

AI-generated upgrade rules will not eliminate all upgrade work anytime soon. But even partial automation can reduce a surprising amount of repetitive work while helping Drupal evolve faster.

May 03, 2026

Made some time to do some work for a few Ansible roles that I maintain. You’ll find the new releases below.

stafwag.proxy_env 2.1.0

An Ansible role to set up the proxy environment in the shell environment ( /etc/profile & /etc/csh_cshrc ) and the package manager. The following package managers are supported:

- apt

- pacman

- pkgng

- Suse (on Suse /etc/sysconfig/proxy is configured)

- yum

stafwag.proxy_env 2.1.0 is available at:

- https://github.com/stafwag/ansible-role-proxy_env

- https://galaxy.ansible.com/ui/standalone/roles/stafwag/proxy_env/

Changelog

stafwag.proxy_env 2.1.0

-

Create pkg.conf on FreeBSD if not exists

- Create pkg.conf on FreeBSD if not exists

- Corrected ansible-lint errors

- Clean up

stafwag.ssh 1.1.1

An ansible role to manage sshd/ssh

stafwag.ssh 1.1.1 is available at:

- https://github.com/stafwag/ansible-role-ssh

- https://galaxy.ansible.com/ui/standalone/roles/stafwag/ssh/

Changelog

stafwag.ssh 1.1.1

- Corrected handler name

- BUGFIX: Corrected handler name

stafwag.ssh 1.1.0

- Corrected last ansible-lint errors

- Corrected last ansible-lint errors

- Example playbooks added

- Documentation updated

stafwag.libvirt 2.1.0

An ansible role to install libvirt/KVM packages and enable the libvirtd service.

stafwag.libvirt 2.1.0 is available at:

- https://github.com/stafwag/ansible-role-libvirt

- https://galaxy.ansible.com/ui/standalone/roles/stafwag/libvirt/

Changelog

stafwag.libvirt 2.1.0

- Update ArchLinux packages

- update_ssh_known_hosts directive added to allow to update the ssh host key after the virtual machine is installed.

- Documentation updated

-

Debug code added

stafwag.etc_hosts 1.1.1

An ansible role to manage /etc/hosts

stafwag.etc_hosts 1.1.1 is available at:

- https://github.com/stafwag/ansible-role-etc_hosts

- https://galaxy.ansible.com/ui/standalone/roles/stafwag/etc_hosts/

Changelog

stafwag.etc_hosts 1.1.1

- CleanUp

- Ansible-lint errors corrected

- Documenation updated

Have fun!

May 02, 2026

Deze ochtend het parcours van het WK Gravel 2025 gereden. Best stoffig!

April 28, 2026

“Mijn Lieve Gunsteling” en “Vuur” zijn allebei boeken over misbruik van jonge tieners door volwassenen. “Gunsteling” van Lucas Rijneveld (ook gekend van “De avond is ongemak”) is prachtig geschreven, maar het graaft door de ogen van de pleger héél diep in de getormenteerde psyche van zowel slachtoffer als pleger. Het bleek daardoor voor mij bijna ondragelijk emotioneel intens en ik heb de 363…

We have 4 streaming services at home: Netflix, Disney+, Prime Video, and Apple TV+. Try finding which films and series are actually available with Dutch audio.

April 27, 2026

Our babysitter recently played a fun game with our kids called “Fake It”. It’s a simple party game, not too complex, and doesn’t require any props. The app store is full of them, but they all hide ads or have a €9.99/week (week!) subscription model, all trying to trick you into buying or paying. But it’s such a simple game. Surely, I can just make my own version, right?

April 25, 2026

April 17, 2026

My productivity has increased by at least 300% with AI assistance. You can get amazing results nowadays. If you use the tools right. Discover 4 key ingredients that make the tools work for you in this post.

Many people have only tried free AI via ChatGPT or similar web chatbots. It’s easy to dismiss those tools, since they lack all 4 ingredients.

1. Context. If I ask you how I could improve my business, you won’t be able to provide a good answer. You don’t know all the details about my business that matter. All you can do is offer generic advice or make guesses (hallucinations). It’s the same with AI tools. Don’t rely on the knowledge baked into the LLM base models. Either provide this knowledge, or provide the tools to obtain that knowledge. You can include “all relevant knowledge” in the prompt, but this is labor-intensive. This is why you want an agentic tool.

2. Agentic tools. I’ve been using Claude Code, a CLI tool that provides agentic AI for knowledge tasks (not just coding). There is also Claude Cowork, a desktop tool, and alternatives from vendors besides Antrophic. These tools use a loop in which the AI determines whether it needs more information and then goes looking for it. You can give these tools a task or a question, and then they will, if called for, run hundreds of searches and commands. They can look at your documents, codebases, and web resources. Tell these tools “Fix GitHub issue $link”, and they’ll look at the issue, anything references on the issue, as your codebase, make changes, run tests, make more changes, check results via the browser, fix some final issues, create a draft pull request, and provide you with a summary of what was done and possible next steps.

3. Feedback harness. When writing code, you often don’t get everything correct the first time. Which is why automated tests are great. More generally, fast feedback loops are great, regardless of whether you’re doing software development. For software development, you’ll get much better results if the AI tools can actually run the code and run tests and other CI tools to verify everything is correct.

4. Model. AI capabilities are increasing at an incredible pace. If you’re using the latest models, your experience will be worlds apart from those using 2-year-old models. For maximum quality, there are 3 metrics to max out: model size/capability, model version, and effort parameters. In other words, use the latest version of the biggest model with “max effort”. At the time of writing this post, that is Claude Opus 4.7 with max-effort when using Antrophic, or GPT-5.4 Pro with heavy thinking when using OpenAI. These settings eat tokens, so you will quickly run into your subscription limits of the basic tiers. Then again, paying 200 USD a month for the higher tiers so you can 5x your productivity is quite the bargain.

Those 4 points provide a conceptual framework. There is more to learn, and the AI space is evolving quickly. Ask your favorite AI tool how you can improve your AI workflows, starting from this post, to get specifics.

Some more tips:

- Know how to use CLAUDE.md / AGENTS.md

- Create sandboxed environments so you can let agents run autonomously for longer periods of time

- Mind the current model’s tendency to sycophancy when prompting. If you go, “Here is my idea, is it good?” LLMs will often say yes even if there are issues. Adding “Be brutally honest” to your prompt or CLAUDE.md helps. It takes some practice to build up an understanding of how to prompt and in which ways responses should be distrusted. As a starting point, treat current LLMs as overeager sycophantic juniors with an inhuman, jagged skill profile, who tirelessly work at superhuman speeds.

- Claude Code (or similar) plus local files in a text format works well. I’ve been enjoying Obsidian + Claude Code for personal knowledge management.

You can stay up to speed with AI capabilities development via Don’t Worry About the Vase and Astral Codex Ten, which I both highly recommend.

Shameless plug: my company provides an AI Assistant for MediaWiki, giving you AI capabilities on top of collaborative knowledge management, ideal for organizations.

The post Boot Your Productivity With AI appeared first on Blog of Jeroen De Dauw.

April 15, 2026

Ik ben niet zo voor lijstjes, maar als ik onder dreiging van foltering een boeken top 3 zou moeten geven, dan zou “Max, Micha & het Tet-offensief” daar zeker in staan. Van auteur Johan Hardstad verscheen in 2024 al een nieuwe roman in het Noors onder de titel “Under brosteinen, stranden!” en volgens doorgaans goed ingelichte bronnen (ik mailde met de uitgever) zou in het najaar van 2026 de…

April 10, 2026

Ne rien avoir à penser

Après le « Je n’ai rien à cacher », voici venu l’ère du « Je n’ai rien à penser »

Se faire prendre pour des crétins parce que ça fonctionne

Google l’annonce : il y a plus de personnes dans le monde avec un smartphone Android que de personnes qui ont accès à de l’eau propre et des égouts.

Cela implique, toujours selon Google, qu’il faut plus d’IA pour ces personnes.

Non, sérieusement, je ne déconne pas. C’est vraiment ce que les gens de Google vont raconter dans les universités dans des événements qui ressemblent un peu à ce que des vendeurs de cigarettes pourraient organiser dans des clubs de sport pour former la jeunesse à fumer en offrant un an de cigarettes gratuites.

Et ils enfoncent le clou: de toute façon, personne n’a le choix d’utiliser l’IA ou non. C’est comme ça. Exactement ce que disait Anthropic: « Que vous le vouliez ou non, préparez-vous pour ce monde stupide ! »